One of the easiest ways to get stuck when building a new website is to confuse improvement with progress.

You tweak the title tag. Then the meta description. Then the heading spacing. Then the alt text. Then the internal anchor text. Then maybe the homepage copy again. Everything feels productive. But nothing new is actually entering the site.

That’s the trap I’ve been thinking about while building Web Idea US – Marketing Lab.

At this stage, I’m not avoiding SEO. I’m delaying deep SEO optimization on purpose.

That’s a different thing.

I’m still making sure the site is readable, indexable, structured, and usable. I’m still using clean titles, categories, featured images, excerpts, metadata, and a basic technical foundation. But I’m not trying to perfect every page before I’ve built enough content for the site to have a real semantic footprint.

And that is the experiment.

What I Wanted to Test

The core question behind this article is simple:

Does building a good base of relevant content create better early visibility signals than spending the same time trying to fully optimize a small number of pages?

My working hypothesis is yes.

Not because on-page SEO is unimportant. It absolutely matters. But because early-stage websites often need a stronger content body before search engines and AI systems can understand the site’s real thematic direction.

In other words, I’m testing whether a site with a small but growing cluster of relevant, helpful articles sends a stronger signal than a technically cleaner site with too little substance.

Why This Matters

This matters because time matters.

For a small website, or for a site being rebuilt into a more focused project, the first real constraint is not theory. It’s resource allocation.

You can spend three hours making one article 7% better.

Or you can spend those three hours publishing another meaningful page that expands the site’s topical map.

At some point, polishing becomes a form of delay.

For early organic growth, I believe search systems need enough context to answer questions like:

- What is this site really about?

- Does it consistently cover a topic?

- Is this just one isolated article, or the beginning of a real content structure?

- Is the site useful enough to test in search results?

The same logic increasingly applies to AI discovery as well.

A site with clearer topical relationships, repeated relevance, and human-useful explanations has a better chance of being understood than a site with perfect tags but weak content depth.

That is why I’m prioritizing content first.

Setup and Context

This experiment is happening on webideaus.com, an older domain that I’m actively reshaping into a public marketing lab.

The site is still early-stage in its current form. I’ve already set up the basic foundation:

- WordPress with a clear category structure

- branded design and visual system

- GTM and GA4

- Google Search Console

- basic meta setup

- clean navigation and content categories

So this is not a case of publishing into chaos.

What I’m not doing yet is full-page perfection work across every article and every page.

I’m not deeply reworking every heading hierarchy.

I’m not over-editing every meta field every few days.

I’m not spending energy on domain authority tactics right now.

I’m not acting like the site is mature when it is still building its first meaningful layer.

Instead, I’m trying to create the first 8–10 relevant articles before I shift into a heavier SEO refinement phase.

That is a conscious strategic choice.

What I Changed and What I’m Testing

The main decision I made was this:

I chose to build content breadth before deep SEO polishing.

That means:

- I’m publishing foundational articles first

- I’m aligning them under clear categories

- I’m keeping the site natural, readable, and human

- I’m not obsessing over every technical or on-page detail too early

- I’m allowing Google to start seeing a pattern before I begin tightening every page

The logic is not “publish fast no matter what.”

That would be a mistake.

The content still needs to be:

- relevant

- helpful

- natural

- readable

- strategically structured

- visually coherent

- aligned with what real humans would expect from a site like this

This is not an argument for publishing garbage and hoping indexing will save it.

It’s an argument for not over-optimizing too early at the expense of content mass and thematic clarity.

What Happened So Far

The early signals are encouraging.

After publishing my first article, I started seeing the kind of response I hoped for: not massive traffic, not dramatic results, but the right kind of early movement.

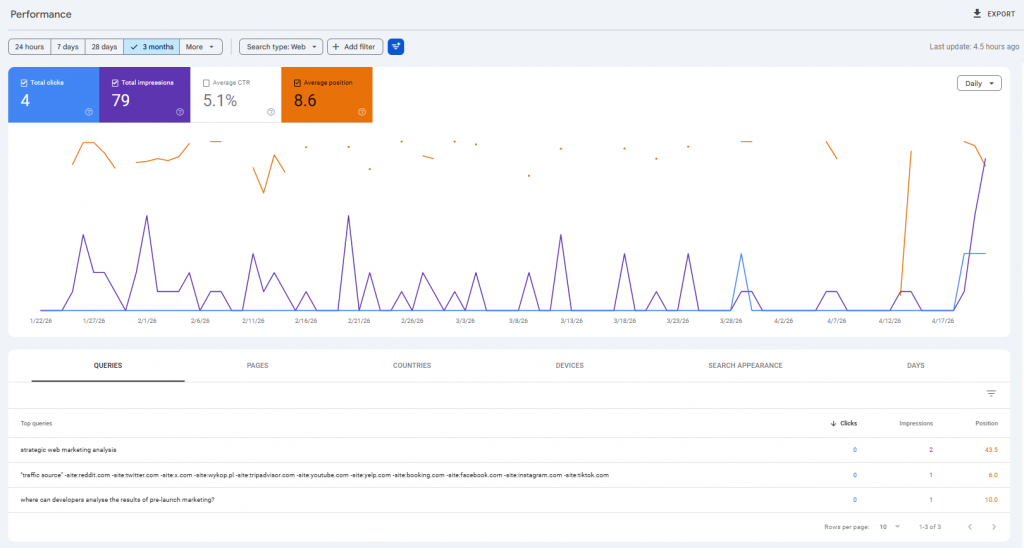

Within the first week (04/16/2026 to 04/23/2026), the article began generating impressions in Google Search Console. The screenshot above showed 4 clicks, 79 impressions, a 5.1% CTR, and an average position of 8.6 over that period.

More importantly, the page was not sitting passively in the index. Google was already testing it in search, and the article had started appearing for actual queries even though the site is still in a foundational phase. That suggests the page was accepted into early search evaluation rather than ignored. The query mix is still small and not perfectly aligned yet, which is normal at this stage, but the pattern itself is positive.
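As a quick sanity check on those figures, CTR is just clicks divided by impressions. Here is a minimal Python sketch using only the numbers from the report; the function name `ctr_percent` is my own and purely illustrative.

```python
def ctr_percent(clicks: int, impressions: int) -> float:
    """Return click-through rate as a percentage, rounded to one decimal."""
    if impressions == 0:
        # CTR is undefined with no impressions; returning 0.0 is an assumption
        return 0.0
    return round(clicks / impressions * 100, 1)


# Figures from the Search Console report described above
print(ctr_percent(4, 79))  # 5.1
```

With a sample this small, a single extra click would move the percentage noticeably, which is one more reason to read it as a signal rather than a result.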

That matters more than the raw numbers right now.

Because at this stage, I’m not looking for scale.

I’m looking for acceptance.

Is Google finding the page?

Is it indexing the page?

Is it testing the page in search?

Is it beginning to associate the site with relevant ideas?

So far, the answer looks like yes.

And for a first article on a still-developing site, that’s exactly the kind of signal I wanted to see.

What I Think This Means

My interpretation is not that “content first always wins.”

My interpretation is more specific:

On a new or newly repositioned site, a good content base may create earlier and more useful search understanding than trying to perfect each page in isolation.

Search engines do not evaluate pages only as isolated assets. They evaluate them as part of a broader site context.

If one article exists, the system sees one article.

If several connected, relevant articles exist, the system starts seeing a topic.

That difference matters.

The first phase of growth is often not about ranking perfectly.

It is about being understood correctly.

Deep SEO optimization still matters. A lot.

But I think it matters more once there is enough material to optimize across:

- internal linking opportunities

- topic clusters

- category signals

- supporting articles

- search intent distinctions

- user navigation patterns

Without enough content, optimization has less surface area to work with.
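One rough way to picture that surface area is to count how many article-to-article pairs could be connected by an internal link. The sketch below only counts pairs. The function name is hypothetical, and it says nothing about how search engines actually weigh links.

```python
from itertools import combinations


def possible_link_pairs(article_count: int) -> int:
    """Count the distinct pairs of articles that could link to each other."""
    articles = range(article_count)
    return sum(1 for _ in combinations(articles, 2))


for n in (1, 3, 10):
    print(n, "articles ->", possible_link_pairs(n), "possible pairs")
# 1 articles -> 0 possible pairs
# 3 articles -> 3 possible pairs
# 10 articles -> 45 possible pairs
```

With one article there is nothing to connect. With ten, there are already dozens of candidate connections to evaluate.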

That is why I’m not rushing into perfection.

What This Does Not Mean

This is important.

I am not saying:

- ignore SEO

- publish fast without thinking

- skip structure

- write thin content

- forget user experience

- stop caring about titles, metadata, or clarity

That would be lazy, not strategic.

The site still has to feel real.

It has to look human.

It has to be useful.

It has to make sense.

It has to match user expectations.

It has to avoid the look and texture of synthetic “SEO content.”

That limitation matters.

Because weak content does not become strong simply because you published more of it.

If the content is empty, generic, or clearly made just to occupy pages, neither Google nor AI systems are likely to reward it in a meaningful way.

So the better conclusion is this:

Content first works only if the content itself is worth indexing.

Why This Approach Also Makes Sense for AI Optimization

This experiment is not just about classic search engines.

I’m also thinking about AI/LLM optimization and broader discoverability.

AI systems increasingly rely on:

- clarity

- consistency

- thematic structure

- useful explanations

- language that sounds human

- repeated relevance across a topic cluster

A site that has one polished article and no supporting depth may look thin.

A site that begins to build a real network of related ideas has a better chance of being interpreted as a credible source environment.

That doesn’t mean volume wins.

It means structure and coverage matter.

For this stage of the project, building content helps both search visibility and AI understanding more than obsessing over the perfection of a single page.

What I Would Do Next

I’m not done with SEO refinement. I’m sequencing it.

My current plan is:

- Build the first 8–10 high-quality articles

- Watch indexing and query patterns in GSC

- See which topics get picked up first

- Strengthen internal linking once the content body is large enough

- Revisit titles, descriptions, headings, and topical alignment in batches

- Improve page-level SEO with more context, not less
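The "see which topics get picked up first" step could be sketched roughly like this. Everything in it is illustrative: the rows are made-up placeholders, not my real Search Console data, and the keyword-to-topic mapping is an assumption that would need adjusting for any real site.

```python
from collections import defaultdict

# Hypothetical topic keywords, not taken from the real site
TOPIC_KEYWORDS = {
    "seo": ["seo", "search"],
    "analytics": ["ga4", "gtm", "analytics"],
}


def impressions_by_topic(rows):
    """Sum impressions per topic, matching each query against topic keywords."""
    totals = defaultdict(int)
    for row in rows:
        query = row["query"].lower()
        # First topic with a matching keyword wins; unmatched queries go to "other"
        topic = next(
            (name for name, words in TOPIC_KEYWORDS.items()
             if any(word in query for word in words)),
            "other",
        )
        totals[topic] += row["impressions"]
    return dict(totals)


# Placeholder rows shaped like a simplified query export
sample_rows = [
    {"query": "content first seo", "impressions": 12},
    {"query": "ga4 setup checklist", "impressions": 5},
    {"query": "marketing lab", "impressions": 3},
]
print(impressions_by_topic(sample_rows))
# {'seo': 12, 'analytics': 5, 'other': 3}
```

A large "other" bucket would itself be useful information, since it points to queries the current categories do not yet cover.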

That approach gives optimization something to work with.

It also prevents me from wasting hours over-tuning pages before the site has earned enough thematic weight to justify that effort.

So my next step is not to pause publishing.

My next step is to continue publishing strategically and let the structure start showing itself.

Final Takeaway

At this stage, I would rather build a site that search systems can begin to understand than a site that looks perfect but says too little.

That is the core of this experiment.

I’m not choosing content instead of SEO.

I’m choosing content before deep SEO optimization because I believe early visibility depends first on thematic relevance, useful structure, and enough content for the site to mean something.

And so far, the early signals suggest that this is a reasonable direction.

Not proven.

Not final.

But reasonable.

For a marketing lab, that’s enough to keep going.